A well-designed, flashy website can be attractive to the eye, but it cannot draw a meaningful amount of traffic if it is not accessible to search engines. The first step of a well-planned SEO strategy is therefore to help search engine robots index your website, so that it can compete in the search rankings and gain more visitors.

Here I discuss a few accessibility parameters that will help you optimize your website effectively. Once you implement them, you should notice a difference in how search engines index your website and crawl each of its links.

Use of Canonical URL

Addresses such as https://weplugins.com/ and https://weplugins.com look identical to a visitor, but search engines can treat variants of an address (with or without www, http versus https, with or without a trailing slash on a path) as separate URLs that serve the same content. If this is neglected, it causes duplicate content issues and can hurt your rankings. A canonical URL tells Google which one of these URLs you prefer to have indexed.

URL canonicalization can be implemented by redirecting duplicate URLs to the canonical URL with a 301 (permanent) HTTP redirect, set in the .htaccess file on an Apache server or in the administrative console on an IIS web server. This makes multiple URLs point to one copy of the content instead of the same content being served from several URLs. Besides the 301 redirect, you can also place a canonical tag on the page with duplicate content to tell Google which page you prefer to have indexed. For example, we have two similar pages:

https://www.yourexamplesite.com/canonical (canonical page)

https://www.yourexamplesite.com/duplicate (duplicate page)

To point to the canonical page in the above case, add the following canonical tag within the <head> tag of the duplicate page:

<link rel="canonical" href="https://www.yourexamplesite.com/canonical"/>
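To confirm that a duplicate page really declares the canonical URL you intended, the tag can be read back out of the page's HTML. The following is a minimal sketch using only Python's standard library; the class and function names (CanonicalFinder, find_canonical) and the sample page are assumptions made for illustration.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        # Only the first canonical link is kept.
        if tag != "link" or self.canonical is not None:
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr_map.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical duplicate page, using the example URL from the text above.
duplicate_page = """
<html><head>
<title>Duplicate</title>
<link rel="canonical" href="https://www.yourexamplesite.com/canonical"/>
</head><body>Same content</body></html>
"""

print(find_canonical(duplicate_page))
```

In practice you would fetch the HTML of each duplicate URL and compare the returned value with the canonical address you expect.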

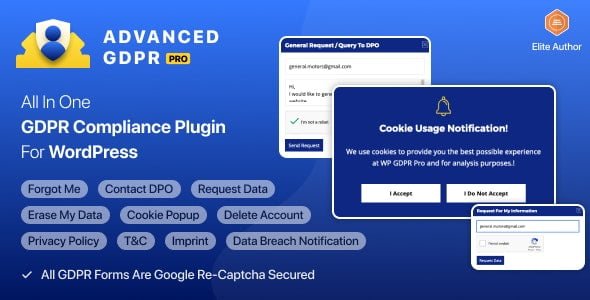

Use the Robots Meta Tag

The robots meta tag is an HTML tag placed within the <head> section of a page. It tells search engines whether to index the page and whether to crawl the links on it.

By setting specific values in its content attribute, you decide whether a page should be indexed and whether its links should be followed.

index, follow: Allows the page to be indexed and links to be crawled (default behavior).

<meta name="robots" content="index, follow">

noindex, follow: Prevents the page from being indexed, but still allows links to be crawled.

<meta name="robots" content="noindex, follow">

index, nofollow: Indexes the page but prevents links from being crawled.

<meta name="robots" content="index, nofollow">

noindex, nofollow: Prevents both indexing and link crawling.

<meta name="robots" content="noindex, nofollow">
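The four combinations above can also be checked programmatically, for example when auditing many pages. This is a rough sketch with Python's standard library; the names RobotsMetaParser and robots_policy are hypothetical, and the sketch only understands the index/noindex and follow/nofollow values discussed here.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Reads <meta name="robots"> and records the index/follow decision."""

    def __init__(self):
        super().__init__()
        # Defaults correspond to "index, follow" when no tag is present.
        self.index = True
        self.follow = True

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag != "meta" or (attr_map.get("name") or "").lower() != "robots":
            return
        content = attr_map.get("content") or ""
        directives = {part.strip().lower() for part in content.split(",")}
        if "noindex" in directives:
            self.index = False
        if "nofollow" in directives:
            self.follow = False


def robots_policy(html):
    """Return (index_allowed, follow_allowed) for the given HTML."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.index, parser.follow


page = '<head><meta name="robots" content="noindex, follow"></head>'
print(robots_policy(page))
```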

Importance of robots.txt File

This file plays a role similar to the robots meta tag: it can restrict search engine robots from accessing specified pages of your website. The robots.txt file must be placed in the root directory of your website. Popular search engines like Google, Bing, and Yahoo obey its instructions and do not crawl the pages it blocks, but spam bots and other badly behaved crawlers may ignore it. For that reason, Google recommends password protecting confidential information rather than relying on robots.txt.

The robots.txt file can be created either manually or with Google Webmaster Tools.

The robots.txt file contains two key directives:

- User-agent: Specifies the search engine crawler the rule applies to.

- Disallow: Indicates the URL path you want to block from being crawled.

Allow all search engines to crawl your entire site:

User-agent: *
Disallow:

Block all search engines from accessing the entire site:

User-agent: *
Disallow: /

Block a specific directory and all its contents:

User-agent: *
Disallow: /directory-name/

These directives form a single entry, and you can create multiple entries to set different access permissions as needed.
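Before uploading a robots.txt file, it can help to test which paths its rules actually block. The sketch below uses Python's standard urllib.robotparser module on the directory example above; the helper is_allowed, the crawler name ExampleBot, and the site address are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# The "block a specific directory" rules from the example above.
rules = """\
User-agent: *
Disallow: /directory-name/
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())


def is_allowed(path, user_agent="ExampleBot"):
    """Return True if the (hypothetical) crawler may fetch the given path."""
    return parser.can_fetch(user_agent, "https://www.yourexamplesite.com" + path)


print(is_allowed("/about"))
print(is_allowed("/directory-name/page.html"))
```

This only tells you how a well-behaved crawler would interpret the rules; it does not stop crawlers that ignore robots.txt.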

Create XML Sitemap

Search engines discover the pages of a website by following links within the site or from other web pages. An XML sitemap is a convenient way to help search robots find and index pages that would otherwise be missed in the normal crawling process. This file lists the URLs of your website along with metadata about each URL.

A sitemap looks like the following. The <urlset>, <url>, and <loc> tags are required, while <lastmod>, <changefreq>, and <priority> are optional:

<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">

<url>

<loc>http://www.instance.com/</loc>

<lastmod>2013-10-04</lastmod>

<changefreq>monthly</changefreq>

<priority>0.7</priority>

</url>

</urlset>

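Here <lastmod> is the last modification date in YYYY-MM-DD format, <changefreq> is how often the content of the page is expected to change (always, hourly, daily, weekly, monthly, yearly, or never), and <priority> is the importance of the URL relative to other URLs on your site, from 0.0 to 1.0.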

Once a sitemap is created, upload it to your site and submit it to search engines such as Google through their webmaster tools. You can also announce the sitemap by adding the following line, with the full URL of the sitemap, to the robots.txt file:

Sitemap: http://example.com/sitemap_location.xml
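For sites with more than a handful of pages, the sitemap is usually generated rather than written by hand. Here is a minimal sketch that builds the structure shown earlier with Python's standard xml.etree.ElementTree module; the function name build_sitemap and the tuple layout of its input are assumptions, and the page data simply repeats the example entry.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (loc, lastmod, changefreq, priority) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, changefreq, priority in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
        ET.SubElement(url, "changefreq").text = changefreq
        ET.SubElement(url, "priority").text = priority
    body = ET.tostring(urlset, encoding="unicode")
    # The XML declaration is prepended by hand so it is always UTF-8.
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    ("http://www.instance.com/", "2013-10-04", "monthly", "0.7"),
])
print(sitemap_xml)
```

The returned string can be saved as the sitemap file and referenced from robots.txt as described above.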

Avoid the use of Flash Content

Although Googlebot has been able to read and crawl Flash content, it cannot index bidirectional languages such as Hebrew and Arabic in Flash files. Some search engines cannot read Flash at all. Flash is also unfriendly to users on old or non-standard browsers, on low-bandwidth connections, or with visual impairments who rely on screen readers, and Adobe ended support for Flash Player at the end of 2020. For the widest reach of your content, replace Flash with HTML.

If content is generated with JavaScript, use a <noscript> tag to provide the same content to users who do not have JavaScript enabled in their browser.

Use of Title Tag

The title of your page is displayed in the search engine results, so use a title that is directly relevant to your content and no longer than 60 characters.

Optimized Meta Description

Like the title, the meta description is displayed in the search engine results and gives a brief idea of what your web page is about. An appropriate meta description can result in higher click-through rates (CTRs) in the search results, so summarize the page using relevant keywords. Use a unique description for each page. An ideal meta description is 130 to 150 characters long; a longer description may be truncated.
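The length guidelines for titles and descriptions are easy to check automatically. The sketch below applies the limits stated in this article (60 characters for the title, 130 to 150 for the description); the function name check_lengths and the sample strings are made up for illustration.

```python
TITLE_MAX = 60                  # title limit suggested in this article
DESCRIPTION_RANGE = (130, 150)  # description range suggested in this article


def check_lengths(title, description):
    """Return a list of human-readable problems; empty if both are fine."""
    problems = []
    if len(title) > TITLE_MAX:
        problems.append(f"title is {len(title)} characters, limit is {TITLE_MAX}")
    low, high = DESCRIPTION_RANGE
    if not low <= len(description) <= high:
        problems.append(
            f"description is {len(description)} characters, "
            f"expected {low} to {high}"
        )
    return problems


for problem in check_lengths("Accessibility Tips for SEO", "Too short."):
    print(problem)
```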

Play Safe with Keywords

Meta keywords are no longer considered in search ranking. Their use has been debated for a long time, and they are now considered a spam indicator. They also reveal to your competitors the keywords you are targeting. So it is strongly recommended to stop using the meta keywords tag. You can still use your keywords in many other places, including the title, the meta description, alt text, anchor text, and the content of your web page, but remember that an ideal keyword density is 3%-7%, and more than that may get your site banned from search engines.
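Keyword density is simply the share of words on a page that match the keyword. As a rough sketch, assuming a single-word keyword and a simple definition of a "word" (runs of letters, digits, and apostrophes), it can be estimated like this; keyword_density is a hypothetical helper, not a standard tool.

```python
import re


def keyword_density(text, keyword):
    """Return the keyword's share of all words in the text, as a percentage."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = words.count(keyword.lower())
    return 100.0 * hits / len(words)


sample = "Red shoes and blue shoes are on sale"
print(keyword_density(sample, "shoes"))
```

Multi-word phrases would need a different counting approach, and the result should be read only as a rough indicator against the range mentioned above.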

Use Image alt Tags

Search engines are generally text based and cannot read images, so they determine an image's importance and relevance from the textual description in its alt attribute. The alt text is also important because the browser shows it when the image cannot be displayed. Using the alt attribute is therefore strongly advisable for all images on your website.
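Images without alt text are easy to overlook, so a quick scan of a page's HTML can help. The following sketch uses Python's standard html.parser module; the names MissingAltFinder and find_missing_alt are assumptions, and the sketch flags both missing and blank alt attributes even though a blank alt can be a deliberate choice for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> whose alt text is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        # A blank alt is flagged for review, not necessarily an error.
        if alt is None or not alt.strip():
            self.missing.append(attr_map.get("src"))


def find_missing_alt(html):
    """Return the src values of images that lack useful alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


page = '<img src="a.png" alt="Logo"><img src="b.png"><img src="c.png" alt="">'
print(find_missing_alt(page))
```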

Conclusion

If you tackle these small fixes, you’ll start seeing improvements in your site’s search rankings without needing a big investment. Think of your site like your own child—it needs a little attention and care from time to time to really thrive.

Good Luck with your Website!
